Research

For us, technology is a living practice – we engage in thinking through making, and we make the things we want to see in the world, grounded in philosophical and anthropological thinking. Our process includes new ways of building AI models, material experiments, critical writing, and collaborative investigations.

We are driven by the idea that humans are complex, that asking the right questions is important, and that observing people matters just as much. Some solutions are complex, some are simple; the simpler ones are usually harder. Technology gives us access to data, and humans give us access to insight.

AI Reading Group

A space for collective thinking about human understanding in an age mediated by algorithmic models and technological artefacts. If you are in Athens, feel free to join us in person at Philosophy Machines HQ (contact us for directions). Otherwise join online. All welcome, no need to book.

Last one: 30 Jan 2026

Find notes from past Reading Group sessions here.

Code

Access our open-source code here

Publications

Despina's 2025 PhD thesis revisits the algorithmic grid, introducing affectivity, complexity and material resonance. Through a series of material encounters and their deliberate "kinking" of established patterns, she demonstrates how algorithmic systems might be recrafted from processes of reduction into expansive sites of co-creation and possibility.

This 2025 article in MIT's Leonardo journal presents findings from Kevin's ethnographic fieldwork in Silicon Valley investigating how computers utilize time and how human engineers and designers use and understand time. Common themes across computational and human uses of time include the quantification and calculation of time as a finite resource; the imbalance of clock time versus “event time” and linear versus cyclical time; the perception and reality of the acceleration and fragmentation of time; and an approach to programmability, which is applied to time, people, and the world as a whole.

Written in 2022 during peak corporate empathy discourse, this piece anticipated AI ethics' central blind spot: empathy can be faked and maintains power asymmetries, while trust demands bilateral vulnerability and time. Years later, the distinction matters more than ever: trust isn't a pattern recognition problem but a relational practice that unfolds in duration and cannot be automated.

This work, presented at the 2022 Royal Anthropological Society conference, is motivated by two drivers of change in AI: a need for ethnographic approaches, and increasing technical development pushing AI into the edges of the network. Kevin considers AI as performance instead of science. AI embedded in small devices, each providing a partial perspective and incomplete knowledge, shifts attention from functionality to actors and actions, and is thus suited to ethnographic study. Conversely, AI itself becomes a participant-observer of human cultures.

In this keynote at the 2019 Tech Meets Design conference, Despina draws inspiration from biology, cybernetics, craft practices and the Bauhaus to reposition design as a material practice that forces us to think about the type of shared futures we want to inhabit.

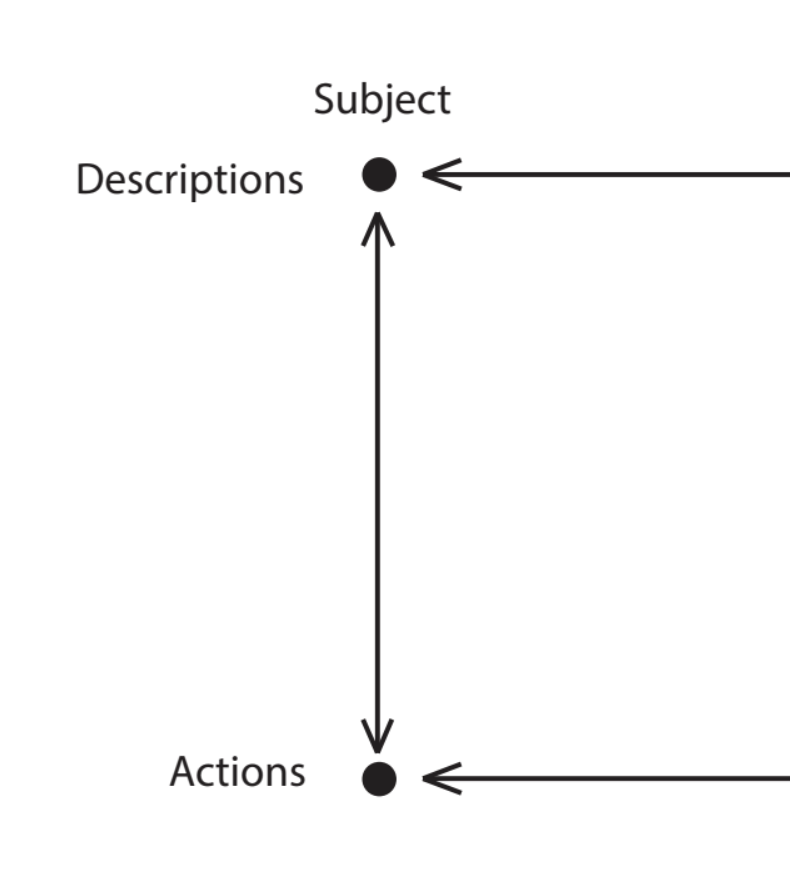

In this paper presented at the 2019 ACM SIGCHI conference, Kevin describes a framework for machine learning based on conversation, which positions human and machine participants in a learning system with feedback mechanisms separated into a level of actions and a level of descriptions. This social model is based on theories of human learning and systems theory, and is intended to help programmers, domain experts, users and non-experts make sense of human-machine learning systems. It addresses ethical dimensions of such systems, including trust and control, as ML systems become rapidly distributed and networked globally.